I. Pod controller basics

1.1 Pod controllers and what they do

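A Pod controller, also called a workload, is the intermediate layer that manages Pods and keeps them in the desired state. When a Pod fails, a restart is attempted first according to the restart policy; if restarting does not bring it back, the controller creates a replacement Pod.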

1.2 Types of Pod controllers

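1. ReplicaSet: creates the specified number of Pod replicas on the user's behalf, keeps the replica count at the desired value, and supports scaling out and in.

A ReplicaSet consists of three main parts:

- The number of Pod replicas the user wants

- A label selector, which determines which Pods it manages

- A Pod template, used to create new Pods when there are too few

It helps users manage stateless Pods and tracks the user-defined target count precisely. A ReplicaSet is usually not used directly, though; it is managed through a Deployment.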
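The reconciliation idea behind a ReplicaSet (compare the desired count with the current count, then create or delete the difference) can be sketched in a few lines of shell. This is only an illustration: `reconcile` is a hypothetical helper, not part of Kubernetes or kubectl, and it ignores label selection and Pod templates.

```shell
# Hypothetical helper: prints the action a ReplicaSet-style controller
# would take to move from the current Pod count to the desired one.
reconcile() (
  desired=$1
  current=$2
  if [ "$current" -lt "$desired" ]; then
    echo "create $((desired - current))"
  elif [ "$current" -gt "$desired" ]; then
    echo "delete $((current - desired))"
  else
    echo "no-op"
  fi
)

reconcile 3 1   # prints: create 2
reconcile 3 5   # prints: delete 2
reconcile 3 3   # prints: no-op
```

The real controller runs this comparison continuously, which is why a deleted Pod is replaced without any user action.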

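2. Deployment: works on top of ReplicaSet and manages stateless applications; it is currently the preferred controller for them. It supports rolling updates and rollbacks and provides declarative configuration.

ReplicaSet and Deployment have gradually replaced the older ReplicationController (RC).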

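3. DaemonSet: ensures that each node in the cluster runs exactly one replica of a given Pod. It is typically used for system-level background tasks, such as the log collection agent of an ELK stack.

Characteristics:

- The service is stateless

- The service must be a daemon process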

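4. StatefulSet: manages stateful applications

5. Job: runs to completion and then exits; it is not restarted or recreated afterwards

6. CronJob: runs tasks on a periodic schedule; it does not need to keep running in the background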

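1.3 Relationship between Pods and controllers

Controllers: objects that manage and run containers (Pods) on the cluster. A controller is associated with its Pods through a label selector.

Pods rely on controllers for application operations such as scaling and upgrades.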

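II. Deployment (stateless application deployment: web)

2.1 What a Deployment does

- Deploys stateless applications

- Manages Pods and ReplicaSets

- Provides rollout, replica count settings, rolling upgrades, and rollbacks

- Provides declarative updates, for example changing only the image

Use case: web services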

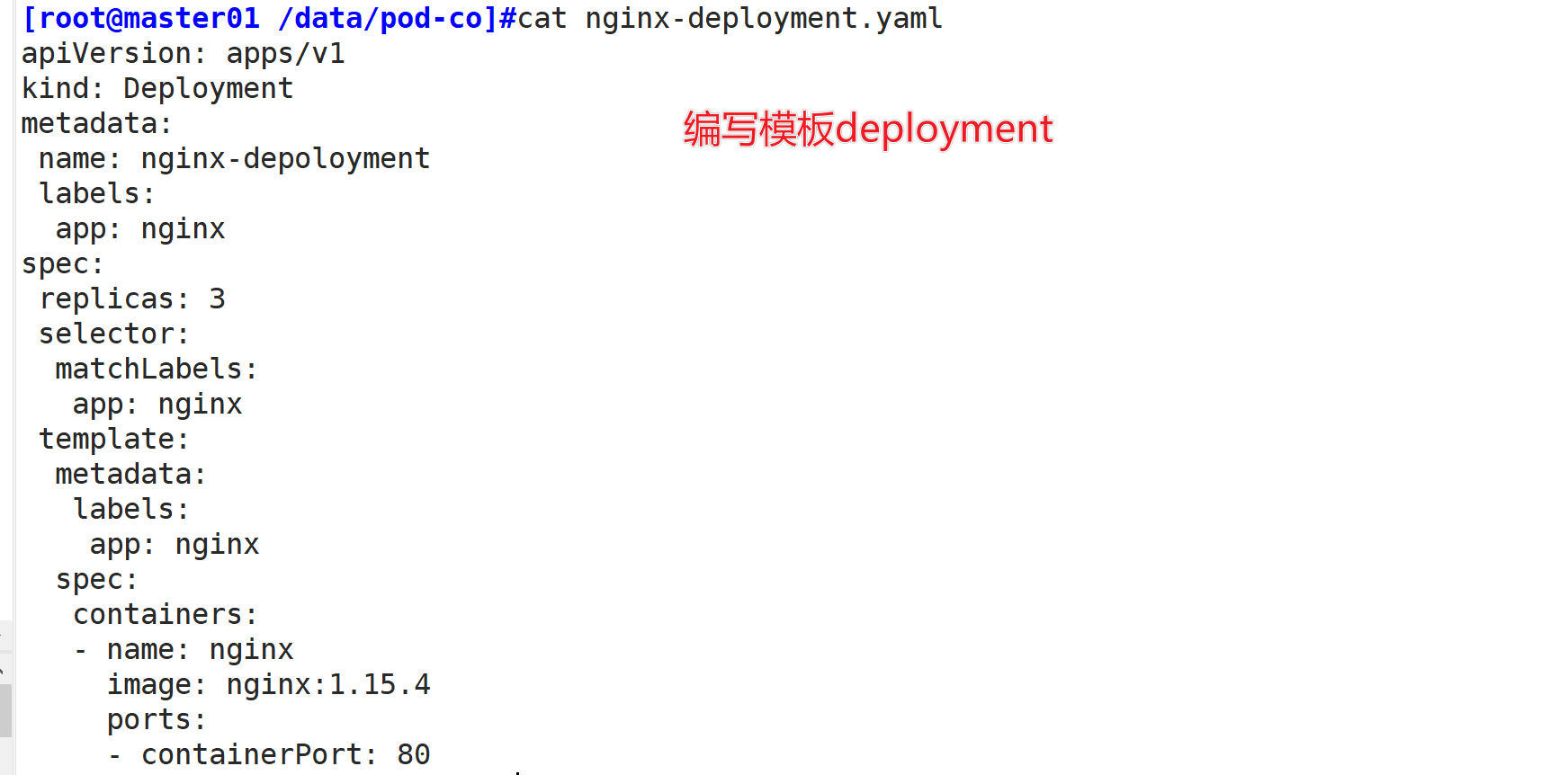

2.2 Example

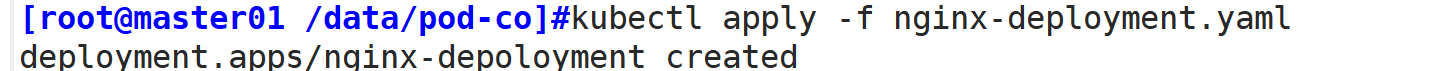

    vim nginx-deployment.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-deployment
      labels:
        app: nginx
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:1.15.4
            ports:
            - containerPort: 80

    kubectl create -f nginx-deployment.yaml

    kubectl get pods,deploy,rs

1. Write the manifest

2. Create the resources

3. Check the Pods

// View the controller configuration

    kubectl edit deployment/nginx-deployment
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      annotations:
        deployment.kubernetes.io/revision: "1"
      creationTimestamp: "2021-04-19T08:13:50Z"
      generation: 1
      labels:
        app: nginx                      # label on the Deployment resource
      name: nginx-deployment
      namespace: default
      resourceVersion: "167208"
      selfLink: /apis/extensions/v1beta1/namespaces/default/deployments/nginx-deployment
      uid: d9d3fef9-20d2-4196-95fb-0e21e65af24a
    spec:
      progressDeadlineSeconds: 600
      replicas: 3                       # desired number of Pods; defaults to 1
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          app: nginx
      strategy:
        rollingUpdate:
          maxSurge: 25%                 # during an upgrade, the number of Pods above the desired count may not exceed 25% of it; an absolute number also works
          maxUnavailable: 25%           # during an upgrade, the number of unavailable Pods may not exceed 25% of the desired count; an absolute number also works
        type: RollingUpdate             # rolling upgrade
      template:
        metadata:
          creationTimestamp: null
          labels:
            app: nginx                  # label attached to the Pod replicas
        spec:
          containers:
          - image: nginx:1.15.4         # image name
            imagePullPolicy: IfNotPresent   # image pull policy
            name: nginx
            ports:
            - containerPort: 80         # port the container listens on
              protocol: TCP
            resources: {}
            terminationMessagePath: /dev/termination-log
            terminationMessagePolicy: File
          dnsPolicy: ClusterFirst
          restartPolicy: Always         # container restart policy
          schedulerName: default-scheduler
          securityContext: {}
          terminationGracePeriodSeconds: 30
    ......

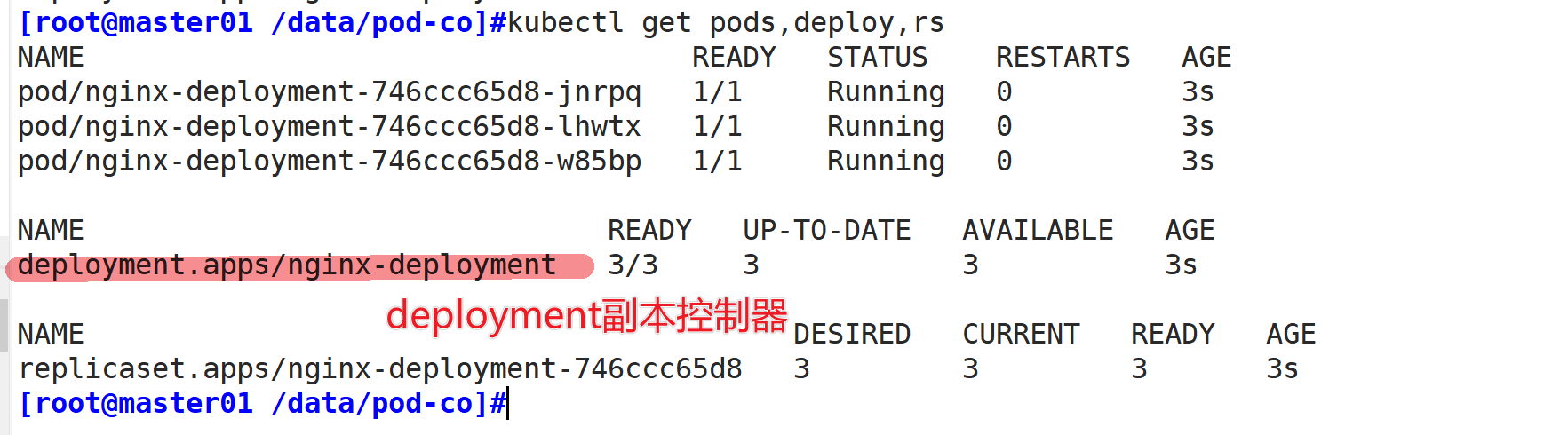
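To see what the 25% values of maxSurge and maxUnavailable mean for a concrete replica count, the percentages can be resolved by hand. The sketch below assumes the documented rounding rules (maxSurge rounds up, maxUnavailable rounds down); `max_surge` and `max_unavailable` are hypothetical helper names used only for this illustration.

```shell
# Hypothetical helpers: resolve a percentage against the desired replica count
# using integer arithmetic only.
max_surge() (
  replicas=$1
  percent=$2
  # round up: extra Pods allowed above the desired count
  echo $(( (replicas * percent + 99) / 100 ))
)

max_unavailable() (
  replicas=$1
  percent=$2
  # round down: Pods allowed to be unavailable during the update
  echo $(( replicas * percent / 100 ))
)

max_surge 3 25         # prints: 1
max_unavailable 3 25   # prints: 0
max_surge 10 25        # prints: 3
max_unavailable 10 25  # prints: 2
```

For the 3-replica Deployment above this means at most 4 Pods exist at any moment during the update, and all 3 desired replicas stay available throughout.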

// View the rollout history

    kubectl rollout history deployment/nginx-deployment
    deployment.apps/nginx-deployment
    REVISION  CHANGE-CAUSE
    1         <none>

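III. StatefulSet (stateful deployment: MySQL)

3.1 What a StatefulSet does

- Deploys stateful applications

- Stable persistent storage: after a Pod is rescheduled it still reaches the same persistent data. This is implemented with PVCs.

- Stable network identity: after a Pod is rescheduled its Pod name and hostname stay the same. This is implemented with a Headless Service (a Service without a Cluster IP).

- Ordered deployment and ordered scale-out: Pods are created one at a time in a defined order, from 0 to N-1, and every earlier Pod must be Running and Ready before the next one starts. The StatefulSet controller itself enforces this ordering.

- Ordered scale-in and ordered deletion, from N-1 down to 0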
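The ordering rules above can be made concrete with a small shell sketch that lists Pod names in creation order and in deletion order. `sts_pod_names` is a hypothetical helper written for this illustration; it only prints names following the `<statefulset>-<ordinal>` pattern and does not talk to a cluster.

```shell
# Hypothetical helper: list the Pod names of a StatefulSet.
#   $1 = StatefulSet name, $2 = replicas, $3 = "up" (creation order, default)
#   or "down" (deletion / scale-in order)
sts_pod_names() (
  name=$1
  replicas=$2
  order=${3:-up}
  if [ "$order" = "up" ]; then i=0; else i=$((replicas - 1)); fi
  count=0
  while [ "$count" -lt "$replicas" ]; do
    echo "$name-$i"
    if [ "$order" = "up" ]; then i=$((i + 1)); else i=$((i - 1)); fi
    count=$((count + 1))
  done
)

sts_pod_names web 3        # prints web-0, web-1, web-2 (one per line)
sts_pod_names web 3 down   # prints web-2, web-1, web-0 (one per line)
```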

3.2 Use cases

Typical use cases: databases, Redis, ZooKeeper, Kafka

3.3 Official documentation

https://kubernetes.io/docs/concepts/workloads/controllers/statefulset/

3.4 Example

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      labels:
        app: nginx
    spec:
      ports:
      - port: 80
        name: web
      clusterIP: None
      selector:
        app: nginx
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: web
    spec:
      selector:
        matchLabels:
          app: nginx # has to match .spec.template.metadata.labels
      serviceName: "nginx"
      replicas: 3 # by default is 1
      template:
        metadata:
          labels:
            app: nginx # has to match .spec.selector.matchLabels
        spec:
          terminationGracePeriodSeconds: 10
          containers:
          - name: nginx
            image: k8s.gcr.io/nginx-slim:0.8
            ports:
            - containerPort: 80
              name: web
            volumeMounts:
            - name: www
              mountPath: /usr/share/nginx/html
      volumeClaimTemplates:
      - metadata:
          name: www
        spec:
          accessModes: [ "ReadWriteOnce" ]
          storageClassName: "my-storage-class"
          resources:
            requests:
              storage: 1Gi

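3.5 Components of a StatefulSet

As the example above shows, a StatefulSet setup consists of the following parts:

● Headless Service: generates resolvable DNS records for the Pod identifiers.

● volumeClaimTemplates: gives each Pod its own fixed storage, using static or dynamic PV provisioning.

● StatefulSet: manages the Pods themselves.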

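Why is a headless Service needed?

In a Deployment, Pod names end in a random string and carry no order. A StatefulSet requires order, so every Pod name must be fixed. When a Pod fails and is rebuilt, its identifier must not change. The Pod name is the unique identifier of the Pod, so it has to stay stable and unique.

To keep the identifier stable, a headless Service resolves names directly to individual Pods, and each Pod is given a unique name.

Why is volumeClaimTemplates needed?

Most stateful replica sets use persistent storage. In a distributed system, for example, each member holds different data, so each member needs its own dedicated storage. A volume defined in the Pod template of a Deployment is shared: every Pod uses the same volume. The Pods of a StatefulSet must not share one volume, so creating their storage from the Pod template does not work. volumeClaimTemplates solves this: when the StatefulSet creates a Pod, it also generates a PVC for that Pod, which binds to a PV and gives the Pod its own dedicated volume.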
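The PVCs generated from a volumeClaimTemplate follow a predictable naming pattern, `<template name>-<StatefulSet name>-<ordinal>`, which is what lets a recreated Pod find its old claim. A minimal sketch of that pattern, using a hypothetical helper `pvc_names` and the names from the myapp example later in this article:

```shell
# Hypothetical helper: list the PVC names a StatefulSet would generate.
#   $1 = volumeClaimTemplate name, $2 = StatefulSet name, $3 = replicas
pvc_names() (
  template=$1
  sts=$2
  replicas=$3
  i=0
  while [ "$i" -lt "$replicas" ]; do
    echo "$template-$sts-$i"
    i=$((i + 1))
  done
)

pvc_names myappdata myapp 3
# prints:
# myappdata-myapp-0
# myappdata-myapp-1
# myappdata-myapp-2
```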

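3.6 Service discovery

Service discovery is the process by which application services locate each other.

When it is needed:

● Highly dynamic placement: Pods can move to other nodes

● Frequent releases: teams iterate in small, fast steps, shipping first and optimizing afterwards

● Automatic scaling: during a big sales promotion, for example, replicas have to be scaled out

Kubernetes does service discovery through DNS. The cluster automatically associates the name of a Service with its CLUSTER-IP, so services can be discovered automatically inside the cluster.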

Add-ons that have provided DNS in Kubernetes:

● skyDNS: versions before Kubernetes 1.3

● kubeDNS: Kubernetes 1.3 to Kubernetes 1.11

● CoreDNS: Kubernetes 1.11 onwards

3.6.1 Installing CoreDNS (required only for clusters deployed from binaries)

Method 1:

Download link: https://github.com/kubernetes/kubernetes/blob/master/cluster/addons/dns/coredns/coredns.yaml.base

    vim transforms2sed.sed
    s/__DNS__SERVER__/10.0.0.2/g
    s/__DNS__DOMAIN__/cluster.local/g
    s/__DNS__MEMORY__LIMIT__/170Mi/g
    s/__MACHINE_GENERATED_WARNING__/Warning: This is a file generated from the base underscore template file: coredns.yaml.base/g

    sed -f transforms2sed.sed coredns.yaml.base > coredns.yaml

Method 2: upload a prepared coredns.yaml file

    kubectl create -f coredns.yaml

    kubectl get pods -n kube-system
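The placeholder substitution in method 1 can be tried out without a cluster. The sketch below runs the same kind of sed script against a tiny stand-in template; `sample.yaml.base` and its three lines are made up for this illustration and are not the real contents of coredns.yaml.base.

```shell
# Work in a throwaway directory so nothing in the current directory is touched.
workdir=$(mktemp -d)

# Stand-in template (assumption: only the three placeholders are shown).
cat > "$workdir/sample.yaml.base" <<'EOF'
clusterIP: __DNS__SERVER__
kubernetes __DNS__DOMAIN__ in-addr.arpa ip6.arpa
memory: __DNS__MEMORY__LIMIT__
EOF

# Same substitution rules as in transforms2sed.sed above.
cat > "$workdir/transforms2sed.sed" <<'EOF'
s/__DNS__SERVER__/10.0.0.2/g
s/__DNS__DOMAIN__/cluster.local/g
s/__DNS__MEMORY__LIMIT__/170Mi/g
EOF

# Apply the script to the template and show the rendered result.
sed -f "$workdir/transforms2sed.sed" "$workdir/sample.yaml.base" > "$workdir/sample.yaml"
cat "$workdir/sample.yaml"
```

The rendered file contains `clusterIP: 10.0.0.2`, `cluster.local`, and `170Mi` in place of the placeholders. In a real cluster the DNS server address has to match the cluster DNS IP configured on the kubelets.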

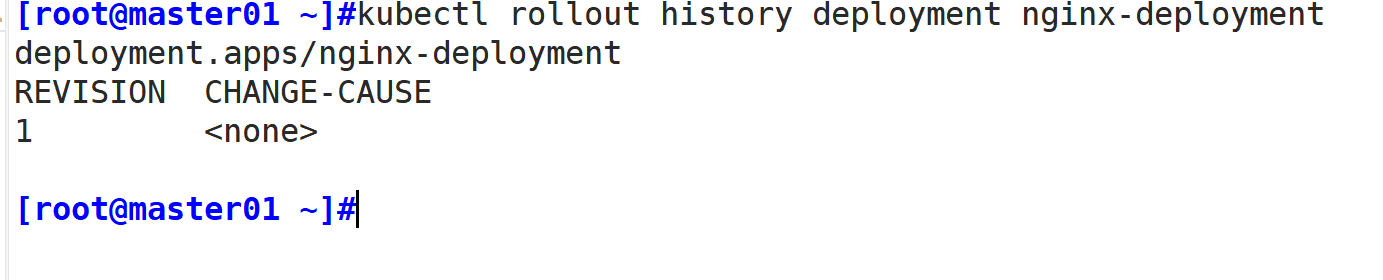

Create the Service:

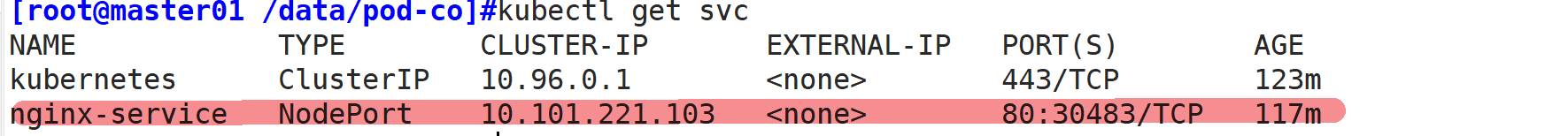

    vim nginx-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-service
      labels:
        app: nginx
    spec:
      type: NodePort
      ports:
      - port: 80
        targetPort: 80
      selector:
        app: nginx

    kubectl create -f nginx-service.yaml

    kubectl get svc
    NAME            TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
    kubernetes      ClusterIP   10.96.0.1       <none>        443/TCP        5d19h
    nginx-service   NodePort    10.96.173.115   <none>        80:31756/TCP   10s

Create the resource:

Check the Service:

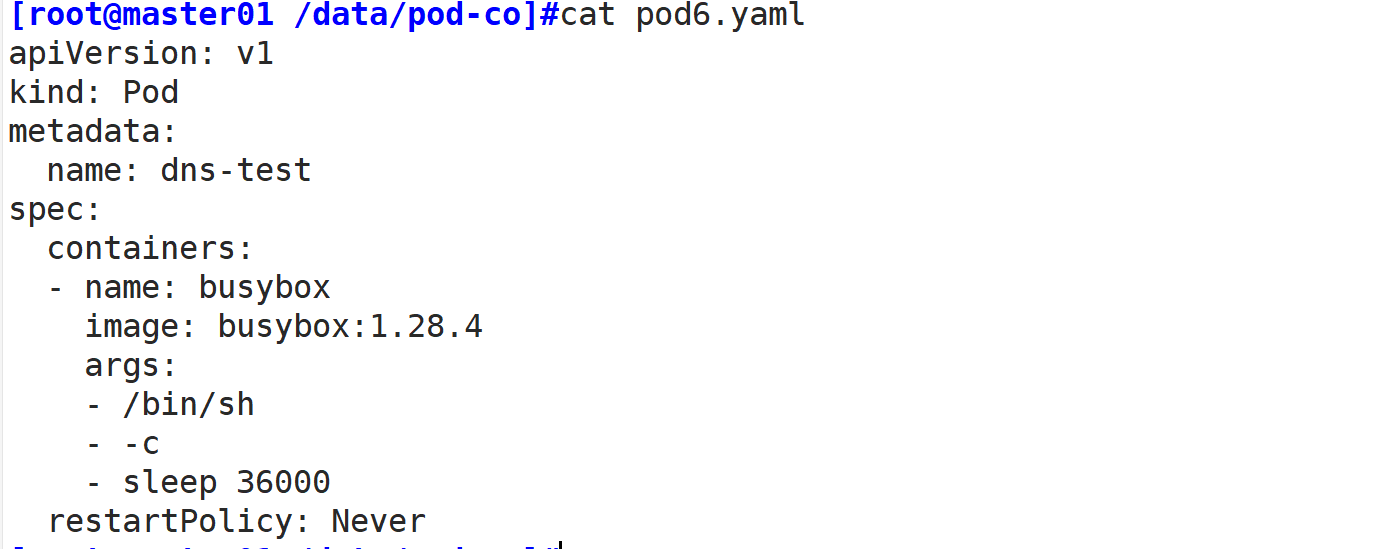

Create a Pod for testing DNS resolution:

    vim pod6.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: dns-test
    spec:
      containers:
      - name: busybox
        image: busybox:1.28.4
        args:
        - /bin/sh
        - -c
        - sleep 36000
      restartPolicy: Never

    kubectl create -f pod6.yaml

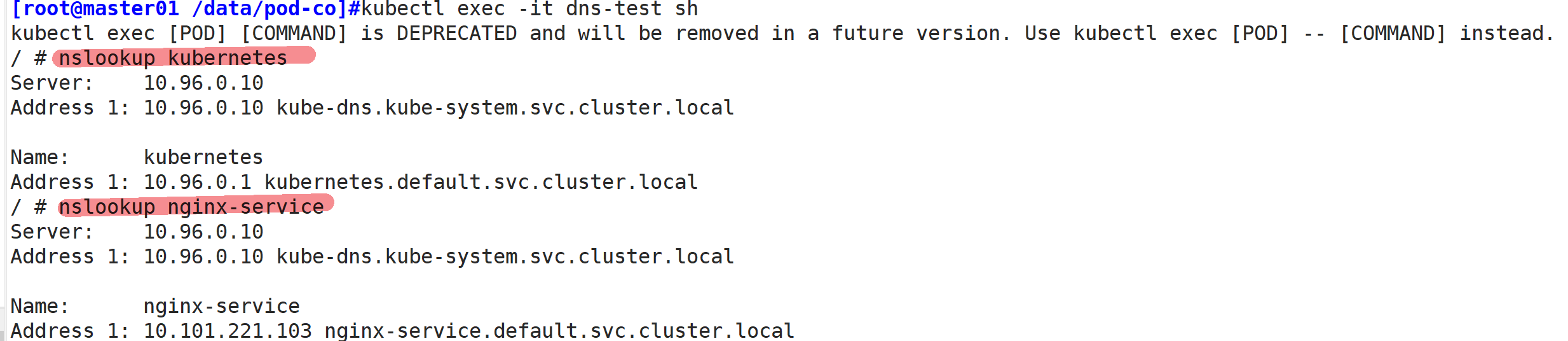

// Resolve the kubernetes and nginx-service names

    kubectl exec -it dns-test -- sh
    / # nslookup kubernetes
    Server:    10.96.0.10
    Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

    Name:      kubernetes
    Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local
    / # nslookup nginx-service
    Server:    10.96.0.10
    Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

    Name:      nginx-service
    Address 1: 10.96.173.115 nginx-service.default.svc.cluster.local

// View the StatefulSet definition

    kubectl explain statefulset
    KIND:     StatefulSet
    VERSION:  apps/v1

    DESCRIPTION:
         StatefulSet represents a set of pods with consistent identities. Identities
         are defined as:
          - Network: A single stable DNS and hostname.
          - Storage: As many VolumeClaims as requested.
         The StatefulSet guarantees that a given network identity will always map to
         the same storage identity.

    FIELDS:
       apiVersion   <string>
       kind         <string>
       metadata

// Defining a StatefulSet in a manifest

As described above, a complete StatefulSet setup consists of a Headless Service, a StatefulSet, and a volumeClaimTemplate. The following manifest defines all three:

    vim stateful-demo.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: myapp-svc
      labels:
        app: myapp-svc
    spec:
      ports:
      - port: 80
        name: web
      clusterIP: None
      selector:
        app: myapp-pod
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: myapp
    spec:
      serviceName: myapp-svc
      replicas: 3
      selector:
        matchLabels:
          app: myapp-pod
      template:
        metadata:
          labels:
            app: myapp-pod
        spec:
          containers:
          - name: myapp
            image: ikubernetes/myapp:v1
            ports:
            - containerPort: 80
              name: web
            volumeMounts:
            - name: myappdata
              mountPath: /usr/share/nginx/html
      volumeClaimTemplates:
      - metadata:
          name: myappdata
          annotations:    # for dynamic PV provisioning: the annotation names the StorageClass the PVC should use; omit it when binding to the statically created PVs used in this walkthrough
            volume.beta.kubernetes.io/storage-class: nfs-client-storageclass
        spec:
          accessModes: ["ReadWriteOnce"]
          resources:
            requests:
              storage: 2Gi

Walkthrough of the example: a StatefulSet depends on a Headless Service that already exists, so the manifest first defines a Headless Service named myapp-svc, which creates DNS records for each associated Pod. It then defines a StatefulSet named myapp, which creates 3 Pod replicas from the Pod template and, through volumeClaimTemplates, requests a dedicated 2Gi volume for each of them from the available PVs.

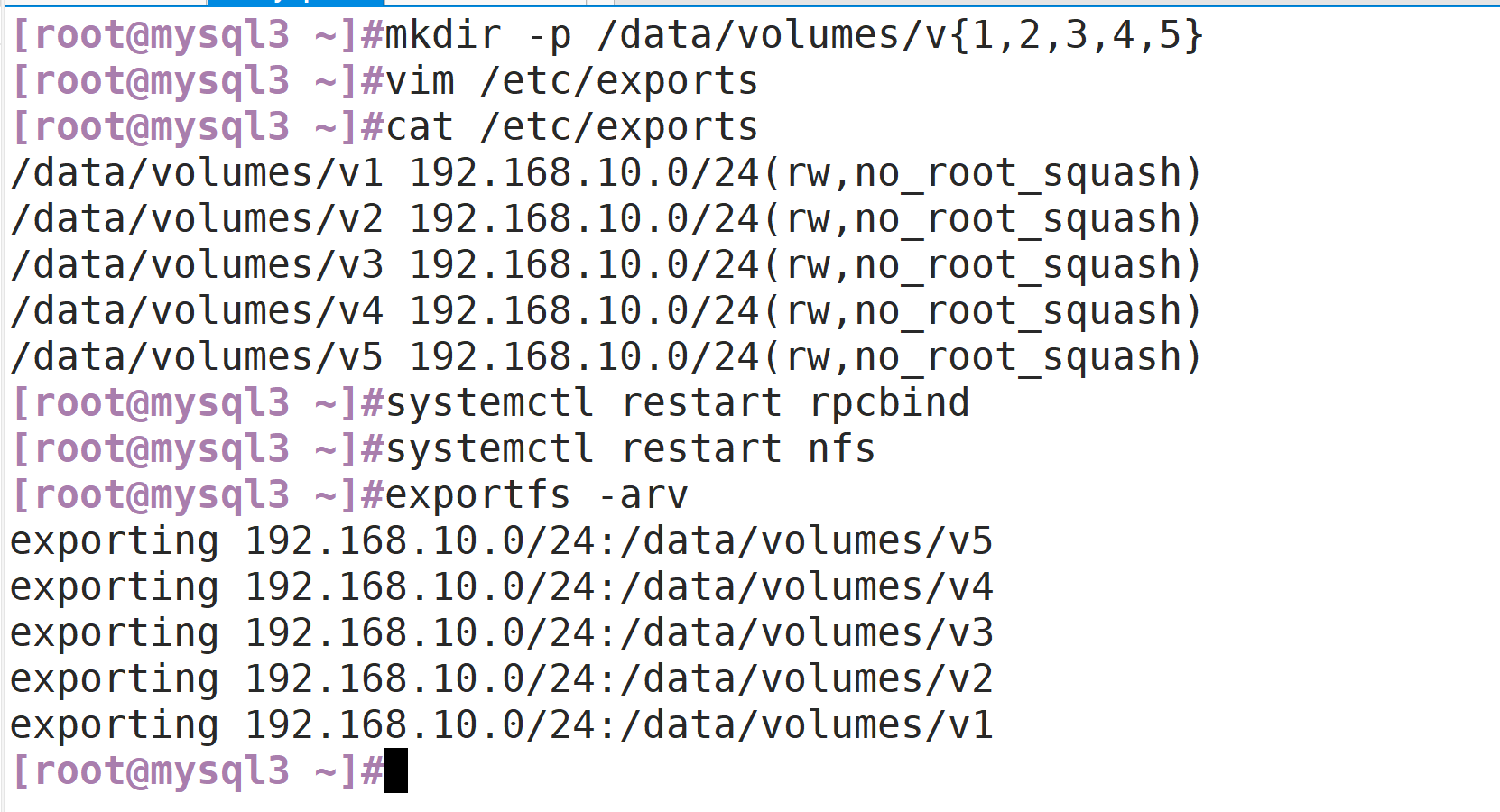

// Create the PVs

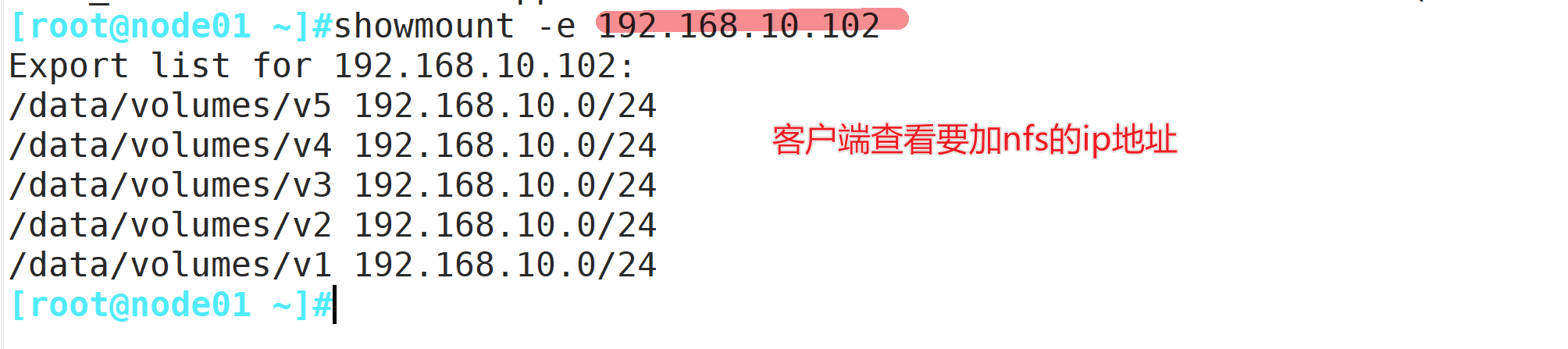

// On the stor01 node

    mkdir -p /data/volumes/v{1,2,3,4,5}

    vim /etc/exports
    /data/volumes/v1 192.168.10.0/24(rw,no_root_squash)
    /data/volumes/v2 192.168.10.0/24(rw,no_root_squash)
    /data/volumes/v3 192.168.10.0/24(rw,no_root_squash)
    /data/volumes/v4 192.168.10.0/24(rw,no_root_squash)
    /data/volumes/v5 192.168.10.0/24(rw,no_root_squash)

    systemctl restart rpcbind
    systemctl restart nfs

    exportfs -arv

    showmount -e

Check from a worker node:

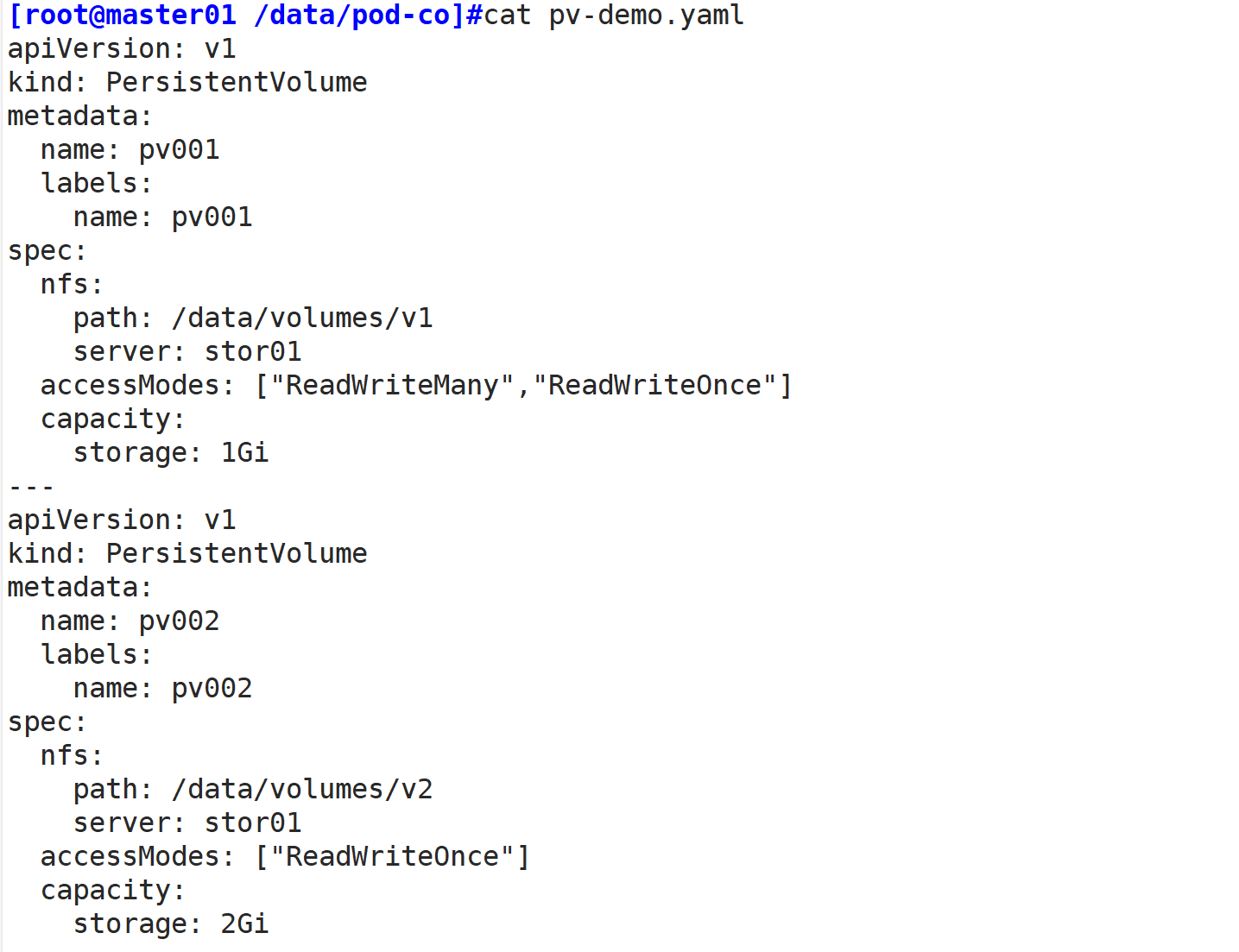

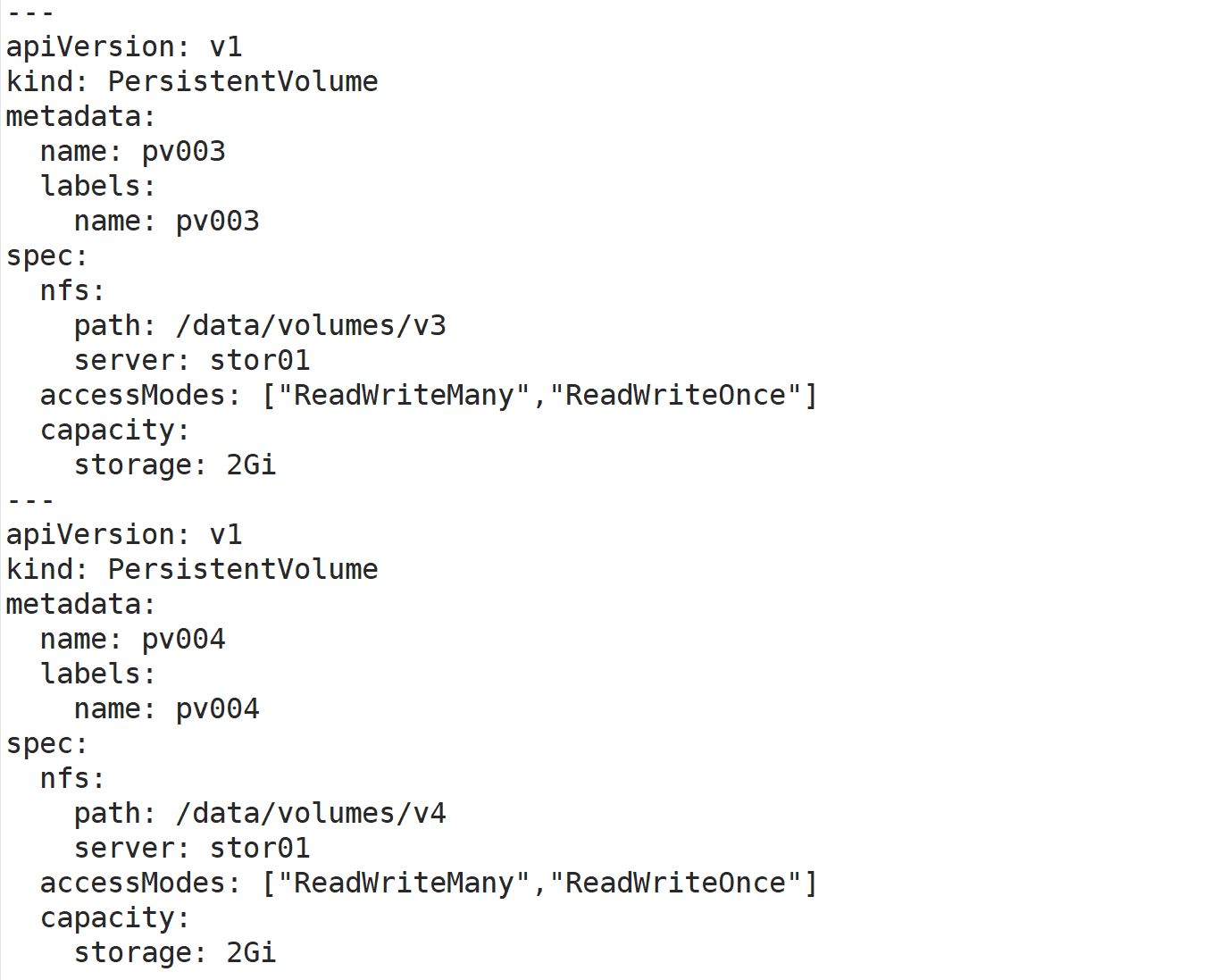

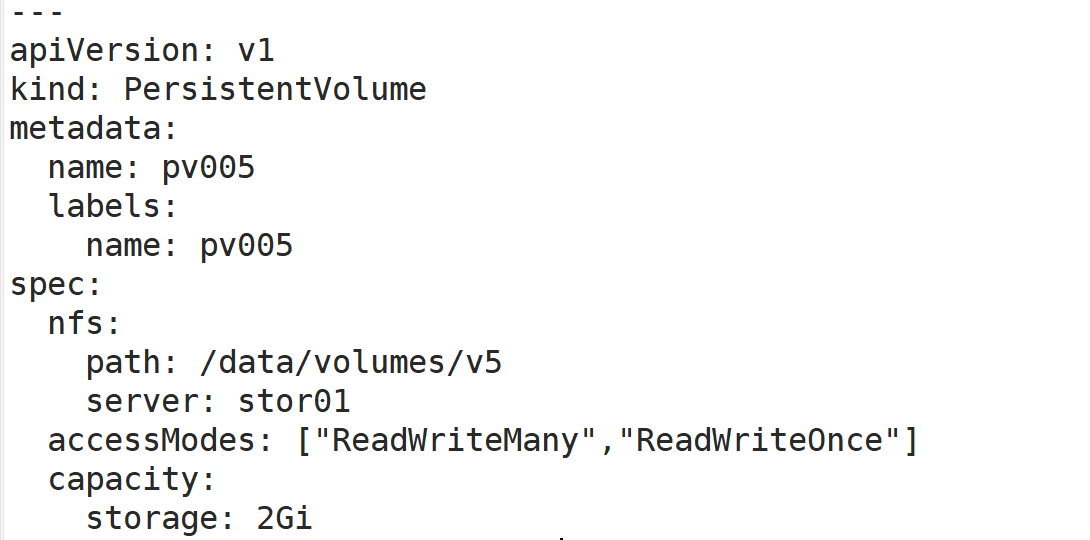

Define the PVs

    vim pv-demo.yaml
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv001
      labels:
        name: pv001
    spec:
      nfs:
        path: /data/volumes/v1
        server: stor01
      accessModes: ["ReadWriteMany","ReadWriteOnce"]
      capacity:
        storage: 1Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv002
      labels:
        name: pv002
    spec:
      nfs:
        path: /data/volumes/v2
        server: stor01
      accessModes: ["ReadWriteOnce"]
      capacity:
        storage: 2Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv003
      labels:
        name: pv003
    spec:
      nfs:
        path: /data/volumes/v3
        server: stor01
      accessModes: ["ReadWriteMany","ReadWriteOnce"]
      capacity:
        storage: 2Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv004
      labels:
        name: pv004
    spec:
      nfs:
        path: /data/volumes/v4
        server: stor01
      accessModes: ["ReadWriteMany","ReadWriteOnce"]
      capacity:
        storage: 2Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv005
      labels:
        name: pv005
    spec:
      nfs:
        path: /data/volumes/v5
        server: stor01
      accessModes: ["ReadWriteMany","ReadWriteOnce"]
      capacity:
        storage: 2Gi

    kubectl apply -f pv-demo.yaml

    kubectl get pv
    NAME    CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
    pv001   1Gi        RWO,RWX        Retain           Available                                   7s
    pv002   2Gi        RWO            Retain           Available                                   7s
    pv003   2Gi        RWO,RWX        Retain           Available                                   7s
    pv004   2Gi        RWO,RWX        Retain           Available                                   7s
    pv005   2Gi        RWO,RWX        Retain           Available                                   7s

Create the StatefulSet

    vim stateful-demo.yaml    # the same manifest as listed above: Headless Service myapp-svc plus StatefulSet myapp

    kubectl apply -f stateful-demo.yaml

    kubectl get svc    # check the headless Service myapp-svc
    NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
    kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   50d
    myapp-svc    ClusterIP   None         <none>        80/TCP    38s

    kubectl get sts    # check the StatefulSet
    NAME    DESIRED   CURRENT   AGE
    myapp   3         3         55s

    kubectl get pvc    # check the PVC bindings
    NAME                STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    myappdata-myapp-0   Bound    pv002    2Gi        RWO                           1m
    myappdata-myapp-1   Bound    pv003    2Gi        RWO,RWX                       1m
    myappdata-myapp-2   Bound    pv004    2Gi        RWO,RWX                       1m

    kubectl get pv    # check the PV bindings
    NAME    CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM                       STORAGECLASS   REASON   AGE
    pv001   1Gi        RWO,RWX        Retain           Available                                                       6m
    pv002   2Gi        RWO            Retain           Bound       default/myappdata-myapp-0                           6m
    pv003   2Gi        RWO,RWX        Retain           Bound       default/myappdata-myapp-1                           6m
    pv004   2Gi        RWO,RWX        Retain           Bound       default/myappdata-myapp-2                           6m
    pv005   2Gi        RWO,RWX        Retain           Available                                                       6m

    kubectl get pods    # check the Pods
    NAME      READY   STATUS    RESTARTS   AGE
    myapp-0   1/1     Running   0          2m
    myapp-1   1/1     Running   0          2m
    myapp-2   1/1     Running   0          2m

    kubectl delete -f stateful-demo.yaml    # deletion starts from myapp-2; Pods shut down in reverse order

    kubectl get pods -w

// The PVCs still exist at this point. When the Pods are recreated, they bind to their original PVCs again.

    kubectl apply -f stateful-demo.yaml

    kubectl get pvc
    NAME                STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    myappdata-myapp-0   Bound    pv002    2Gi        RWO                           5m
    myappdata-myapp-1   Bound    pv003    2Gi        RWO,RWX                       5m
    myappdata-myapp-2   Bound    pv004    2Gi        RWO,RWX

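// Rolling update

The StatefulSet controller deletes and recreates each Pod in the StatefulSet. It proceeds in the same order as Pod termination (from the largest ordinal to the smallest), updating one Pod at a time. It waits until an updated Pod is Running and Ready before updating its predecessor. In the run below, the rolling update goes in the order 2 to 0.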

    vim stateful-demo.yaml    # change the image version to v2
    .....
    image: ikubernetes/myapp:v2
    ....

    kubectl apply -f stateful-demo.yaml

    kubectl get pods -w    # watch the rolling update
    NAME      READY   STATUS              RESTARTS   AGE
    myapp-0   1/1     Running             0          29s
    myapp-1   1/1     Running             0          27s
    myapp-2   0/1     Terminating         0          26s
    myapp-2   0/1     Terminating         0          30s
    myapp-2   0/1     Terminating         0          30s
    myapp-2   0/1     Pending             0          0s
    myapp-2   0/1     Pending             0          0s
    myapp-2   0/1     ContainerCreating   0          0s
    myapp-2   1/1     Running             0          31s
    myapp-1   1/1     Terminating         0          62s
    myapp-1   0/1     Terminating         0          63s
    myapp-1   0/1     Terminating         0          66s
    myapp-1   0/1     Terminating         0          67s
    myapp-1   0/1     Pending             0          0s
    myapp-1   0/1     Pending             0          0s
    myapp-1   0/1     ContainerCreating   0          0s
    myapp-1   1/1     Running             0          30s
    myapp-0   1/1     Terminating         0          99s
    myapp-0   0/1     Terminating         0          100s
    myapp-0   0/1     Terminating         0          101s
    myapp-0   0/1     Terminating         0          101s
    myapp-0   0/1     Pending             0          0s
    myapp-0   0/1     Pending             0          0s
    myapp-0   0/1     ContainerCreating   0          0s
    myapp-0   1/1     Running             0          1s

// Inside every created Pod, each Pod's own name can be resolved

    kubectl exec -it myapp-0 -- /bin/sh
    Name:      myapp-0.myapp-svc.default.svc.cluster.local
    Address 1: 10.244.2.27 myapp-0.myapp-svc.default.svc.cluster.local
    / # nslookup myapp-1.myapp-svc.default.svc.cluster.local
    nslookup: can't resolve '(null)': Name does not resolve

    Name:      myapp-1.myapp-svc.default.svc.cluster.local
    Address 1: 10.244.1.14 myapp-1.myapp-svc.default.svc.cluster.local
    / # nslookup myapp-2.myapp-svc.default.svc.cluster.local
    nslookup: can't resolve '(null)': Name does not resolve

    Name:      myapp-2.myapp-svc.default.svc.cluster.local
    Address 1: 10.244.2.26 myapp-2.myapp-svc.default.svc.cluster.local

// As the lookups above show, a Pod name can be resolved to an IP address from inside a container. The resolved domain name has the following format:

    (pod_name).(service_name).(namespace_name).svc.cluster.local
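The name format can be assembled mechanically from its four parts. The helper below, `pod_fqdn`, is hypothetical and only concatenates strings; the default cluster domain `cluster.local` is taken from the output above and may differ on clusters configured with another domain.

```shell
# Hypothetical helper: build the DNS name of a StatefulSet Pod.
#   $1 = Pod name, $2 = headless Service name, $3 = namespace,
#   $4 = cluster domain (optional, defaults to cluster.local)
pod_fqdn() (
  pod=$1
  service=$2
  namespace=$3
  domain=${4:-cluster.local}
  echo "$pod.$service.$namespace.svc.$domain"
)

pod_fqdn myapp-0 myapp-svc default
# prints: myapp-0.myapp-svc.default.svc.cluster.local
```

Inside the same namespace the short form `myapp-0.myapp-svc` is usually enough, because the Pod's DNS search path supplies the rest.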

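// Summary

Stateless:

1) A Deployment treats all Pods as identical

2) No ordering requirements

3) It does not matter which node a Pod runs on

4) Can be scaled out and in freely

Stateful:

1) Instances differ from each other; each has its own identity and its own metadata, for example etcd and ZooKeeper

2) Instances are not interchangeable peers, and the application depends on external storage.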

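Differences between a regular Service and a headless Service:

Service: an access policy for a group of Pods. It provides a cluster IP for communication inside the cluster, plus load balancing and service discovery.

Headless Service: needs no cluster IP; DNS records resolve directly to the IP addresses of the backing Pods.

Example: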

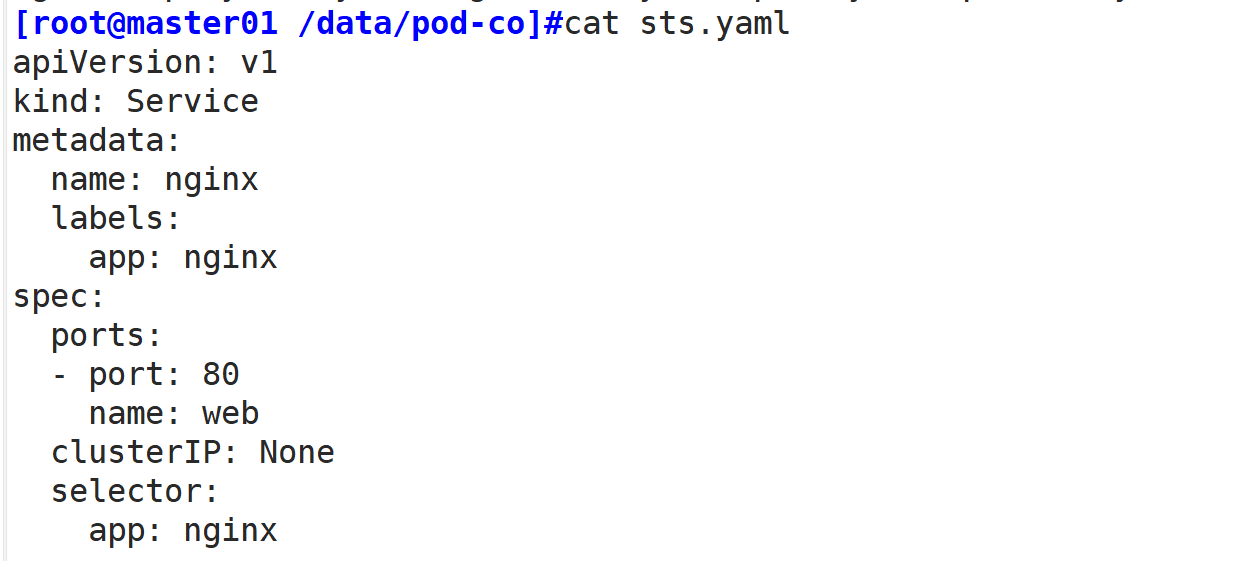

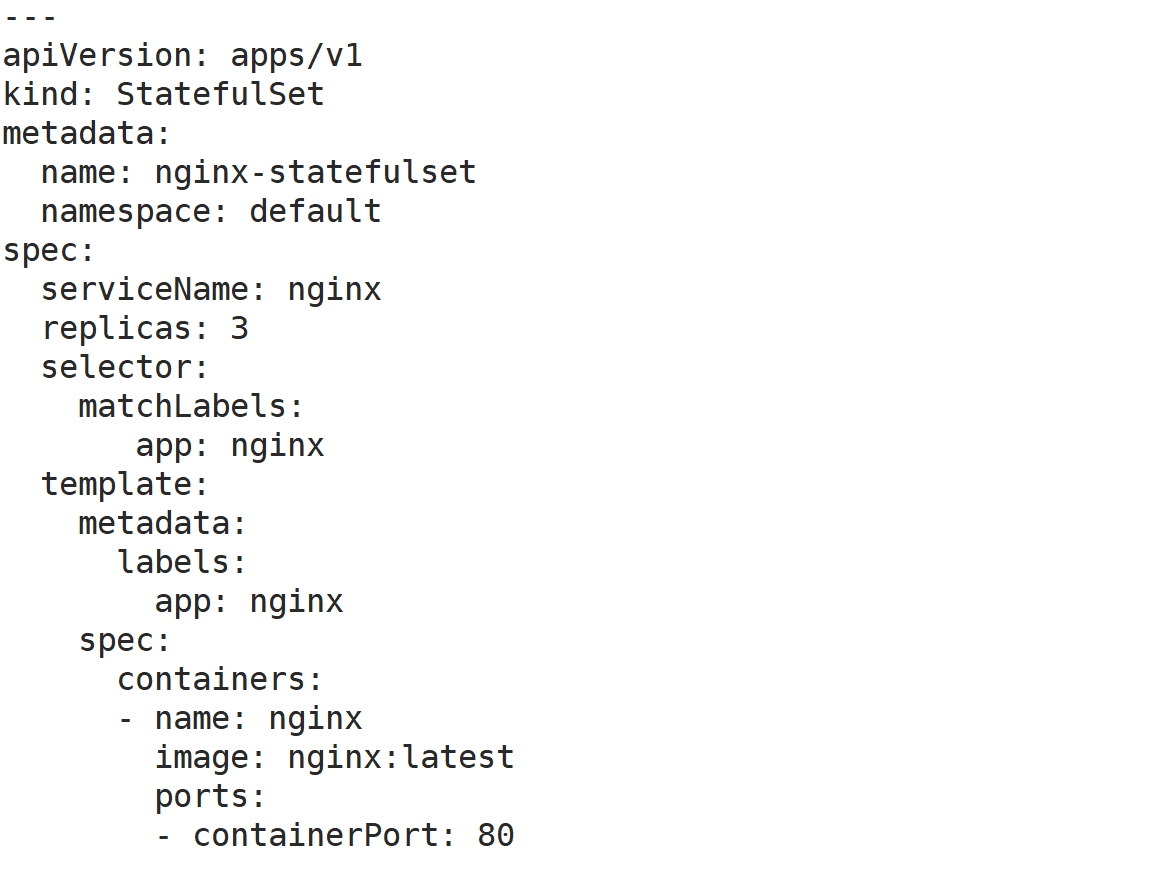

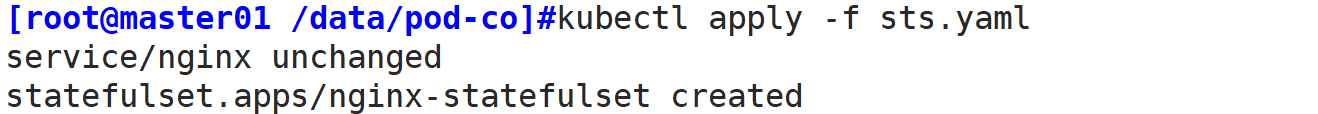

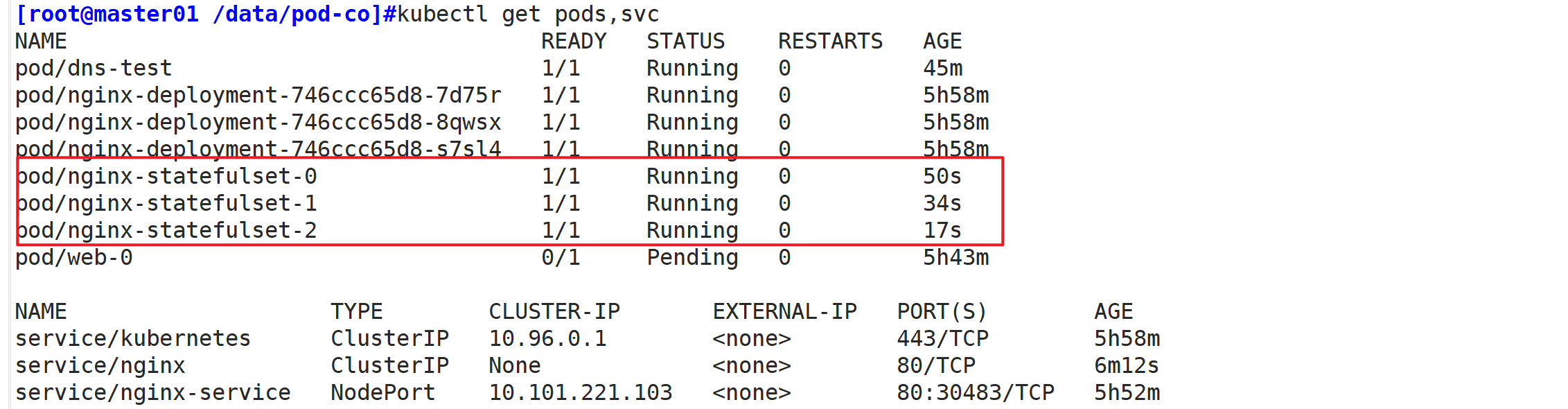

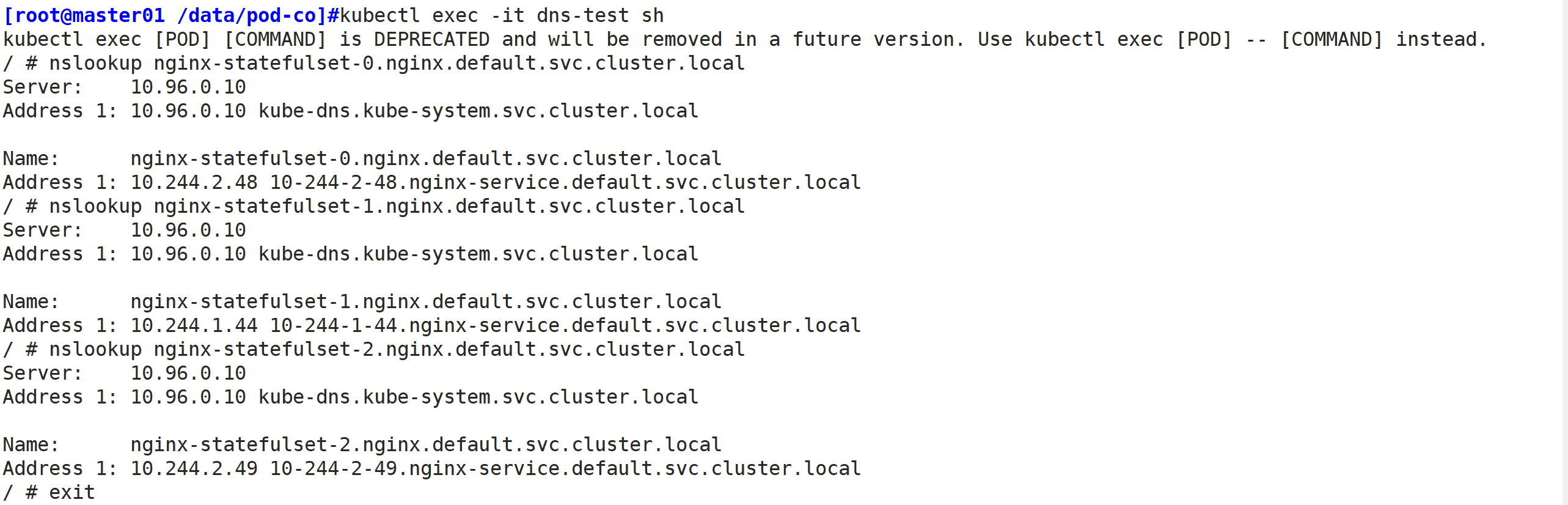

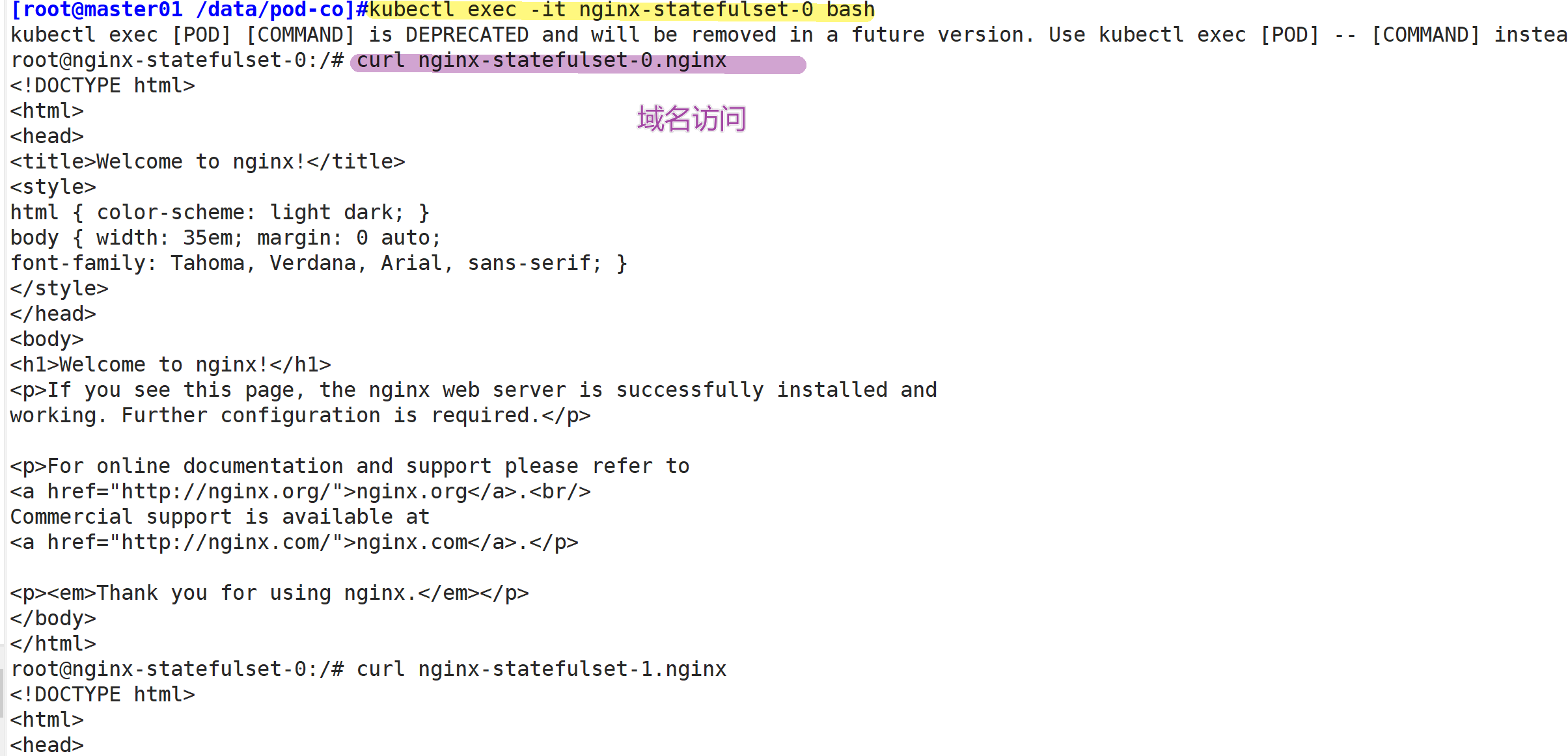

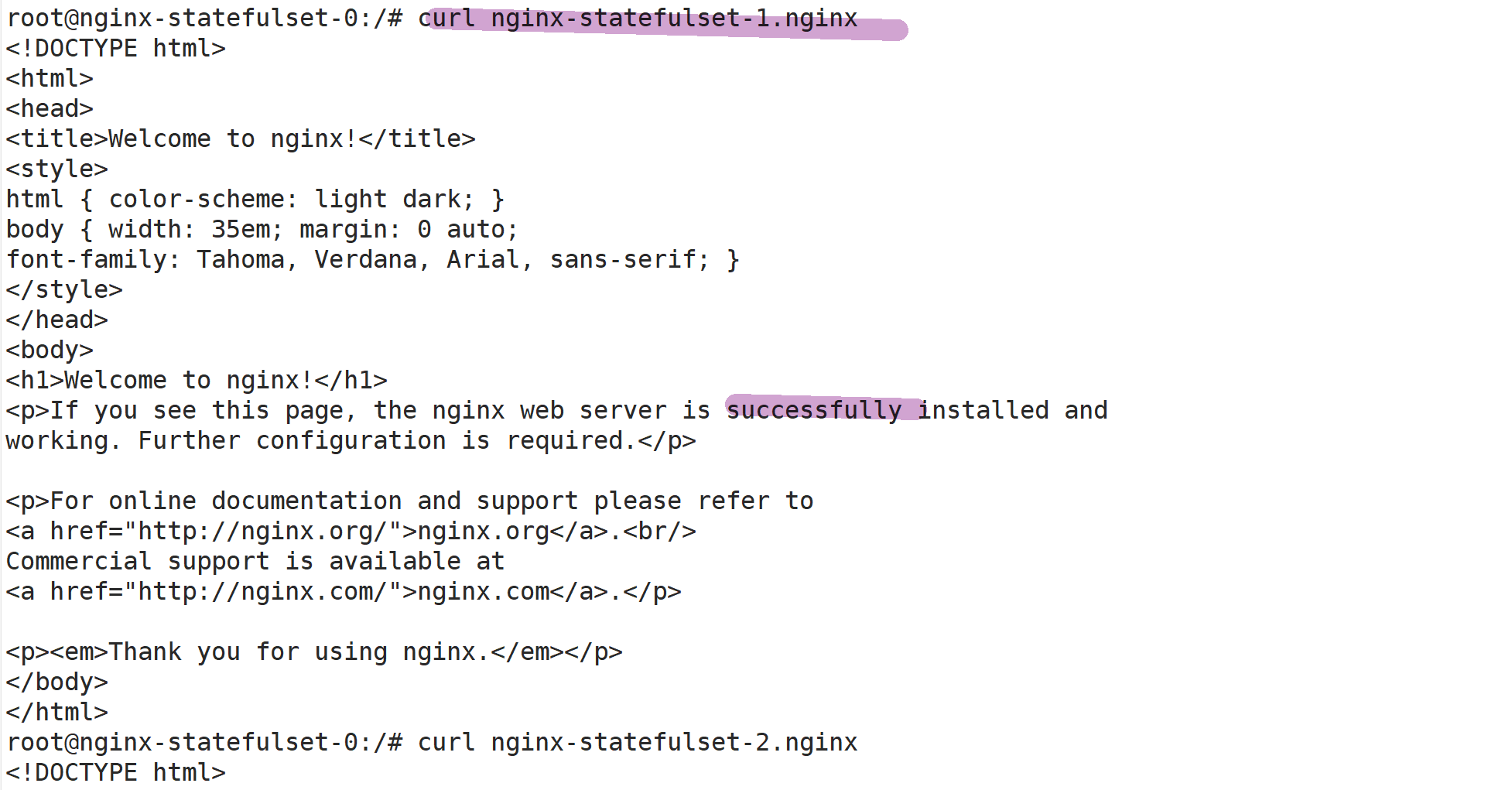

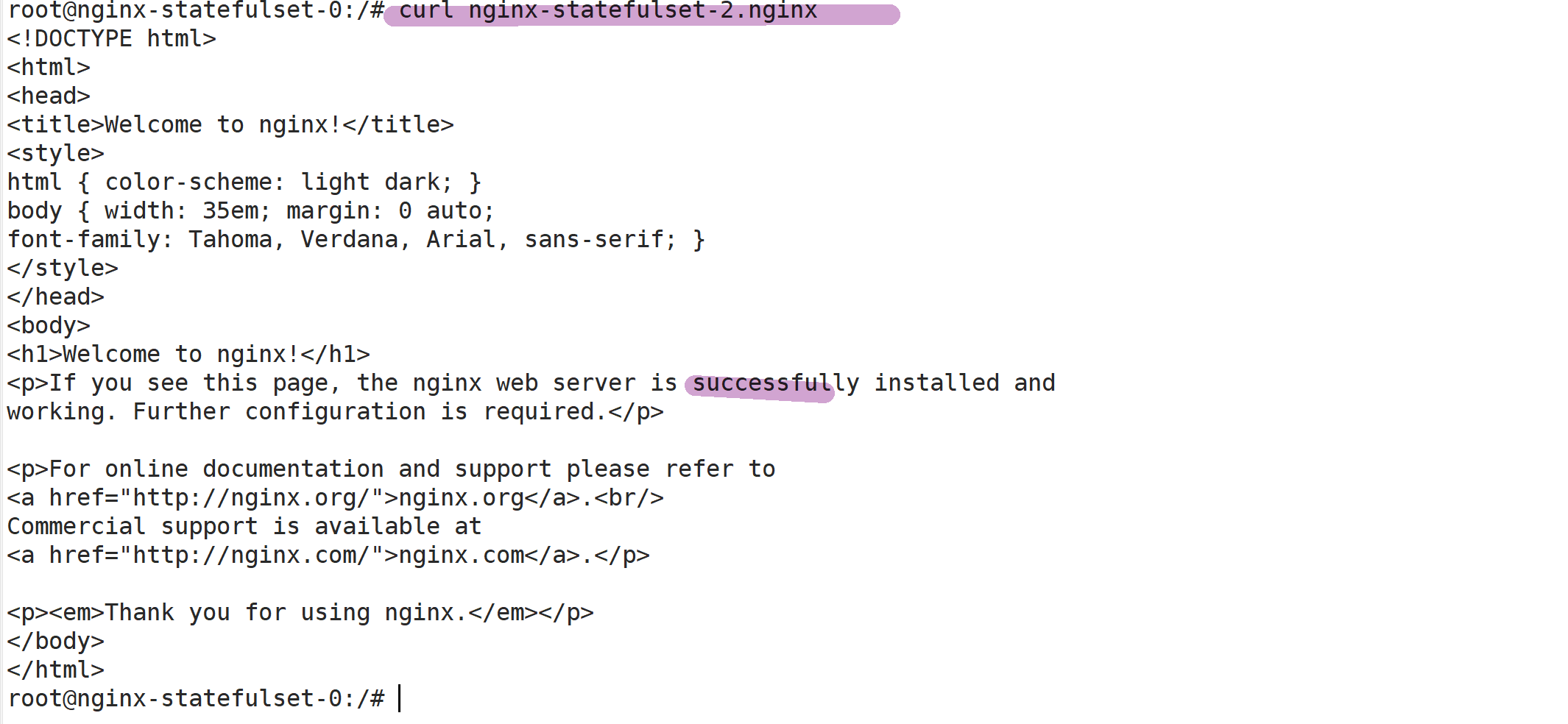

    vim pod6.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: dns-test
    spec:
      containers:
      - name: busybox
        image: busybox:1.28.4
        args:
        - /bin/sh
        - -c
        - sleep 36000
      restartPolicy: Never

    vim sts.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      labels:
        app: nginx
    spec:
      ports:
      - port: 80
        name: web
      clusterIP: None
      selector:
        app: nginx
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: nginx-statefulset
      namespace: default
    spec:
      serviceName: nginx
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:latest
            ports:
            - containerPort: 80

    kubectl apply -f sts.yaml

    kubectl apply -f pod6.yaml

    kubectl get pods,svc

    kubectl exec -it dns-test -- sh
    / # nslookup nginx-statefulset-0.nginx.default.svc.cluster.local
    / # nslookup nginx-statefulset-1.nginx.default.svc.cluster.local
    / # nslookup nginx-statefulset-2.nginx.default.svc.cluster.local

    kubectl exec -it nginx-statefulset-0 -- bash
    /# curl nginx-statefulset-0.nginx
    /# curl nginx-statefulset-1.nginx
    /# curl nginx-statefulset-2.nginx

Test:

    kubectl exec -it dns-test -- sh

    kubectl exec -it nginx-statefulset-0 -- bash

// Scaling out and in

    kubectl scale sts myapp --replicas=4    # scale out to 4 replicas

    kubectl get pods -w    # watch the scale-out

    kubectl get pv    # check the PV bindings

    kubectl patch sts myapp -p '{"spec":{"replicas":2}}'    # scale in by patching

    kubectl get pods -w    # watch the scale-in
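Which Pods the two scaling commands add or remove follows from the ordinal rules described earlier: scale-out appends the next ordinals, scale-in removes the highest ordinals first. The sketch below prints that plan; `sts_scale_plan` is a hypothetical helper for illustration and does not contact a cluster.

```shell
# Hypothetical helper: print the Pods a StatefulSet would create or delete
# when going from the current replica count to the target count.
#   $1 = StatefulSet name, $2 = current replicas, $3 = target replicas
sts_scale_plan() (
  name=$1
  current=$2
  target=$3
  # Scale-out: create the next ordinals in ascending order.
  i=$current
  while [ "$i" -lt "$target" ]; do
    echo "create $name-$i"
    i=$((i + 1))
  done
  # Scale-in: delete the highest ordinal first.
  i=$current
  while [ "$i" -gt "$target" ]; do
    i=$((i - 1))
    echo "delete $name-$i"
  done
)

sts_scale_plan myapp 3 4   # prints: create myapp-3
sts_scale_plan myapp 4 2   # prints: delete myapp-3, then delete myapp-2
```

After the scale-in, the PVCs of the removed Pods are normally still present, so scaling out again reattaches the same volumes.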

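IV. DaemonSet

A DaemonSet ensures that all (or some) Nodes run one replica of a Pod. When a Node joins the cluster, a Pod is added to it. When a Node is removed from the cluster, its Pod is reclaimed. Deleting a DaemonSet deletes all the Pods it created.

Typical uses of a DaemonSet:

● Running a cluster storage daemon on every Node, such as glusterd or ceph.

● Running a log collection daemon on every Node, such as fluentd or logstash.

● Running a monitoring daemon on every Node, such as Prometheus Node Exporter, collectd, the Datadog agent, the New Relic agent, or Ganglia gmond.

Use case: agents

// Official example (monitoring)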

https://kubernetes.io/docs/concepts/workloads/controllers/daemonset/

Example:

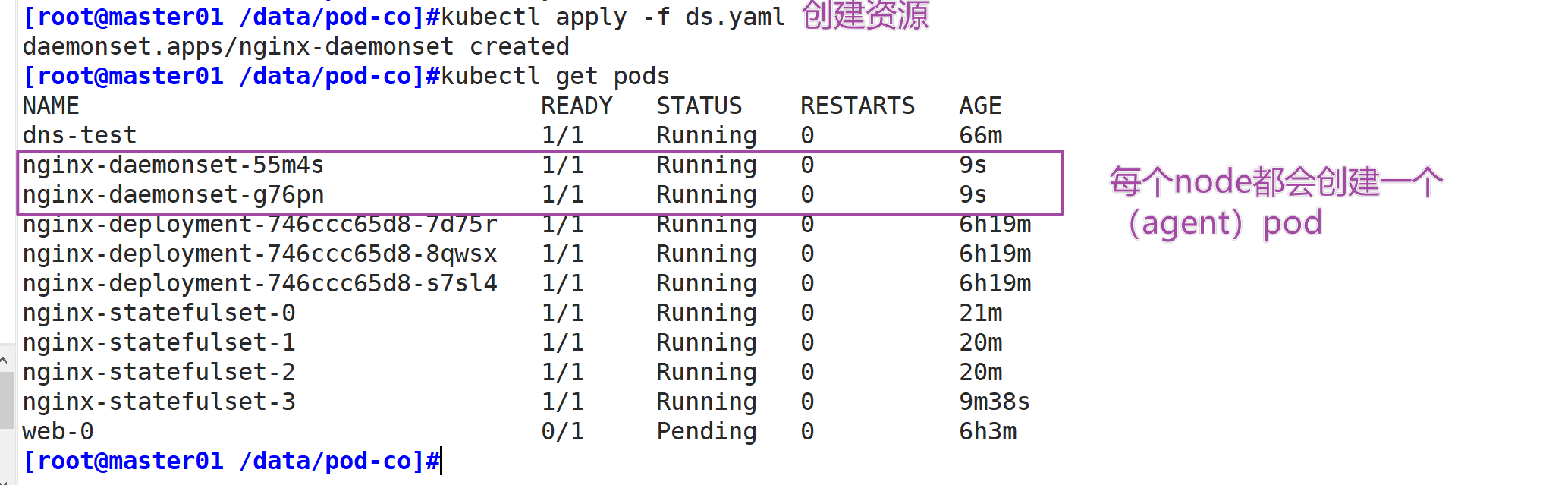

    vim ds.yaml
    apiVersion: apps/v1
    kind: DaemonSet
    metadata:
      name: nginx-daemonset    # object names must be lowercase; nginx-daemonSet would be rejected
      labels:
        app: nginx
    spec:
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:1.15.4
            ports:
            - containerPort: 80

    kubectl apply -f ds.yaml

// The DaemonSet creates one Pod on every node

    kubectl get pods
    nginx-deployment-4kr6h   1/1   Running   0   35s
    nginx-deployment-8jrg5   1/1   Running   0   35s

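V. Job

Jobs come in two kinds: ordinary jobs (Job) and scheduled jobs (CronJob).

A Job is typically used for tasks that need to run only once.

Use cases: database migrations, batch scripts, kube-bench scans, offline data processing, video decoding, and similar workloads

Official documentation: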

https://kubernetes.io/docs/concepts/workloads/controllers/jobs-run-to-completion/

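Example:

    vim job.yaml
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: pi
    spec:
      template:
        spec:
          containers:
          - name: pi
            image: perl
            command: ["perl", "-Mbignum=bpi", "-wle", "print bpi(2000)"]
          restartPolicy: Never
      backoffLimit: 4

// Parameter notes

.spec.template.spec.restartPolicy: this field has three possible values, OnFailure, Never, and Always, and defaults to Always. It describes the restart policy for the containers in the Pod. In a Job it can only be set to OnFailure or Never, because a Pod that is always restarted would never run to completion.

.spec.backoffLimit: sets the number of retries before the Job is considered failed; the default is 6. By default a Job runs uninterrupted unless a Pod fails or a container exits abnormally, at which point the Job follows .spec.backoffLimit as described above. Once .spec.backoffLimit is reached, the Job is marked as failed.

// Pull the perl image on all nodes ahead of time, because the image is fairly large

    docker pull perl

    kubectl apply -f job.yaml

    kubectl get pods
    pi-bqtf7   0/1   Completed   0   41s

// The result is written to the console

    kubectl logs pi-bqtf7
    3.14159265......

// Clean up the Job

    kubectl delete -f job.yaml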

//backoffLimit

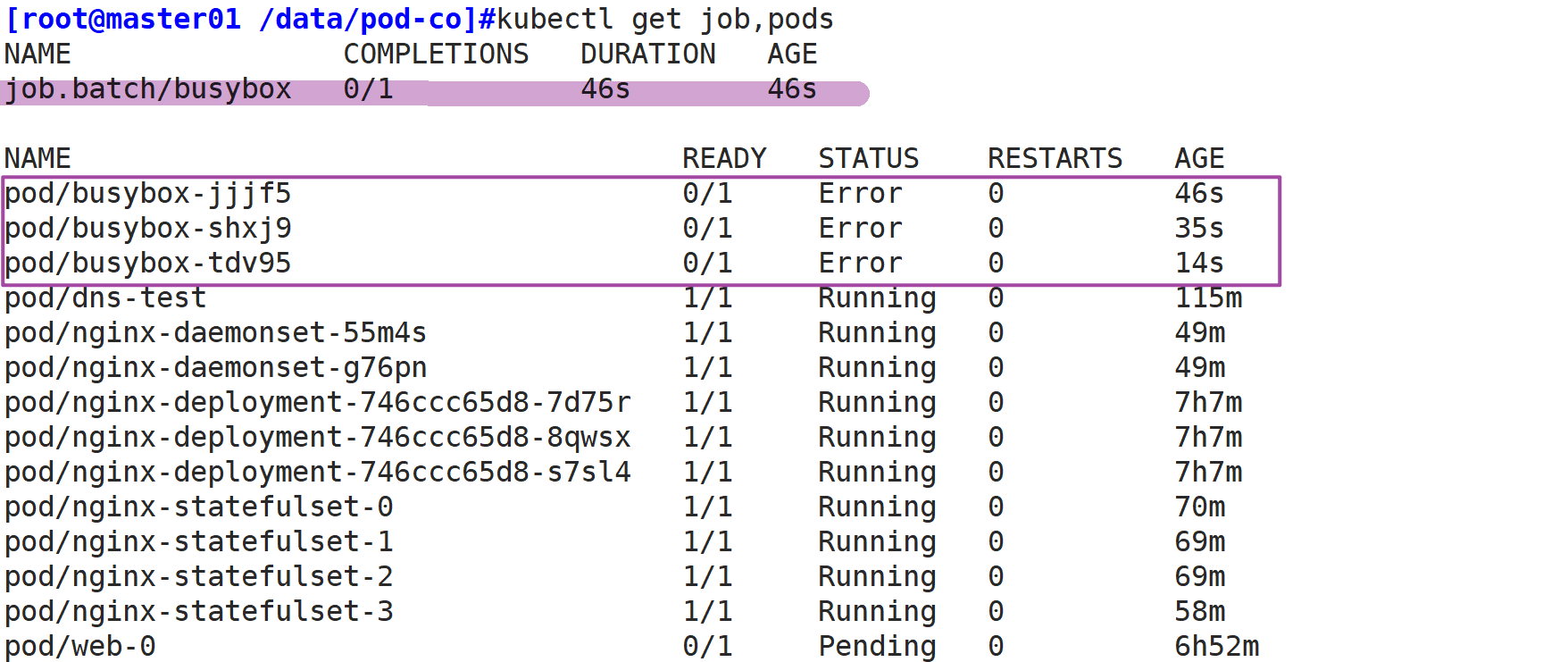

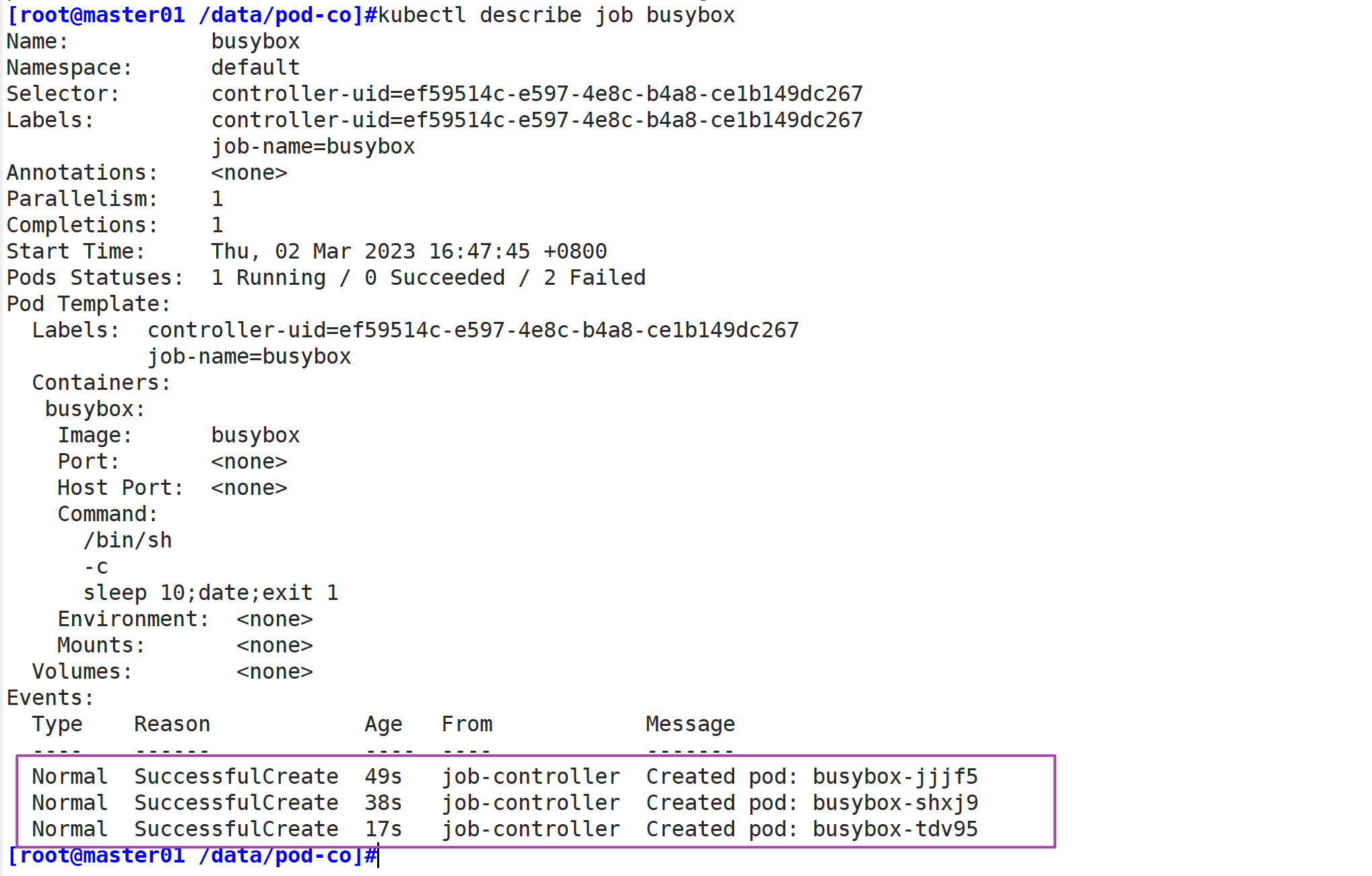

    vim job-limit.yaml
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: busybox
    spec:
      template:
        spec:
          containers:
          - name: busybox
            image: busybox
            imagePullPolicy: IfNotPresent
            command: ["/bin/sh", "-c", "sleep 10;date;exit 1"]
          restartPolicy: Never
      backoffLimit: 2

    kubectl apply -f job-limit.yaml

    kubectl get job,pods
    NAME                COMPLETIONS   DURATION   AGE
    job.batch/busybox   0/1           4m34s      4m34s

    NAME                READY   STATUS   RESTARTS   AGE
    pod/busybox-dhrkt   0/1     Error    0          4m34s
    pod/busybox-kcx46   0/1     Error    0          4m
    pod/busybox-tlk48   0/1     Error    0          4m21s

    kubectl describe job busybox
    ......
    Warning  BackoffLimitExceeded  43s  job-controller  Job has reached the specified backoff limit
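The three Error Pods in the output match backoffLimit: 2: one initial attempt plus two retries. That counting can be mimicked locally with a retry loop. `run_with_backoff_limit` is a hypothetical helper for this illustration; unlike the real Job controller it retries immediately and does not wait between attempts.

```shell
# Hypothetical helper: run a command until it succeeds or until the number
# of failures exceeds the given limit (initial attempt + limit retries).
#   $1 = backoff limit, remaining arguments = command to run
run_with_backoff_limit() (
  limit=$1
  shift
  failures=0
  while :; do
    if "$@"; then
      echo "succeeded after $failures failed attempts"
      return 0
    fi
    failures=$((failures + 1))
    if [ "$failures" -gt "$limit" ]; then
      echo "BackoffLimitExceeded after $failures failed attempts"
      return 1
    fi
  done
)

run_with_backoff_limit 2 false || true   # prints: BackoffLimitExceeded after 3 failed attempts
run_with_backoff_limit 2 true            # prints: succeeded after 0 failed attempts
```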

VI. CronJob

Periodic tasks, similar to crontab on Linux.

Use cases: notifications, backups

Official documentation:

https://kubernetes.io/docs/tasks/job/automated-tasks-with-cron-jobs/

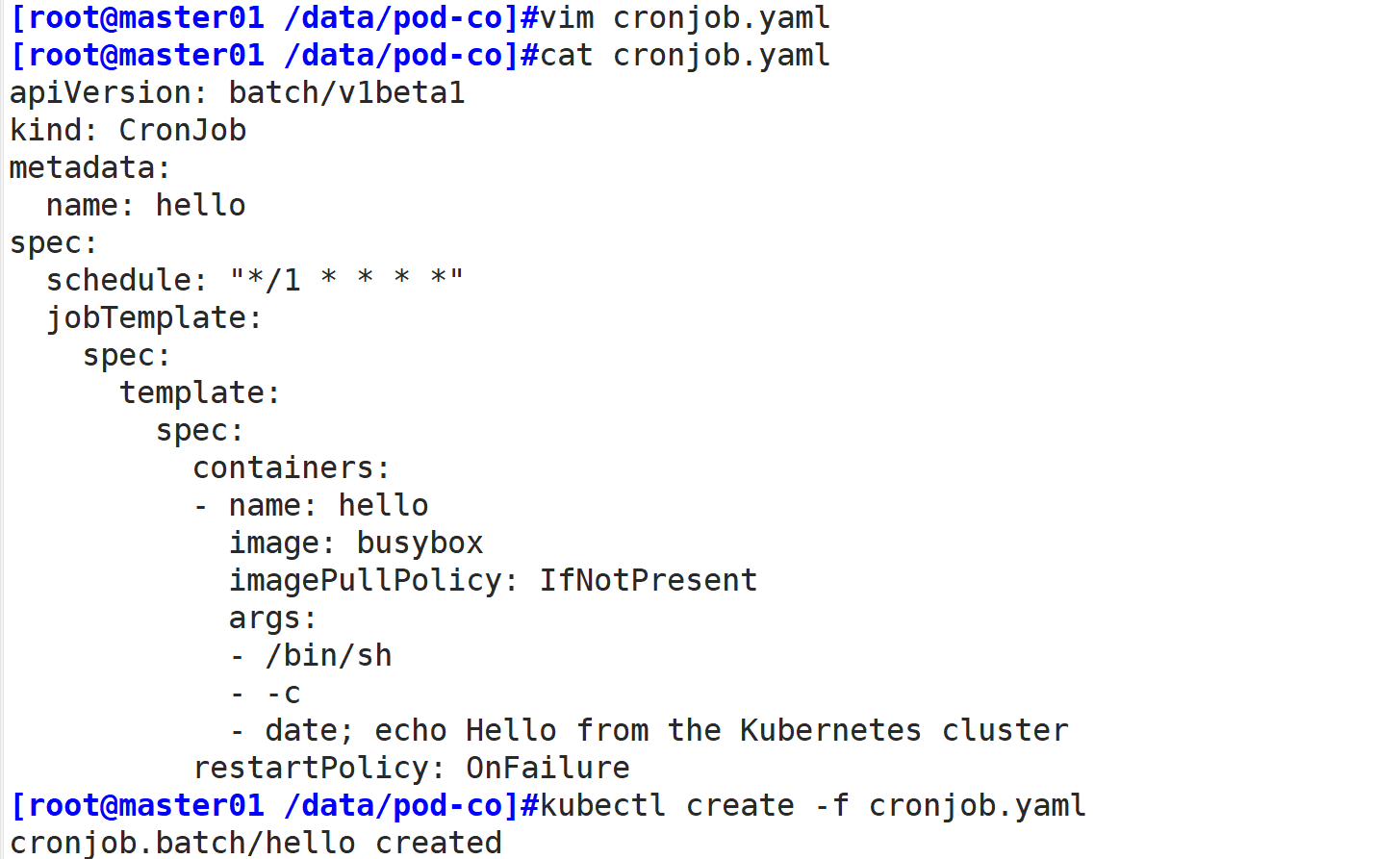

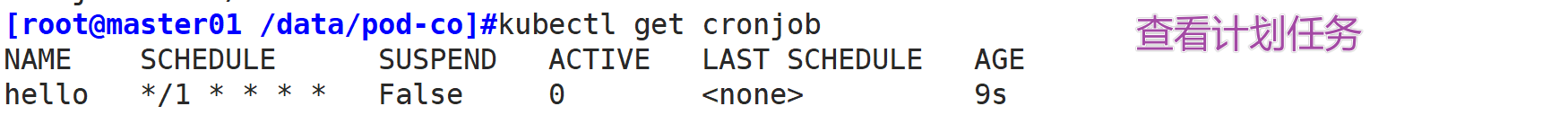

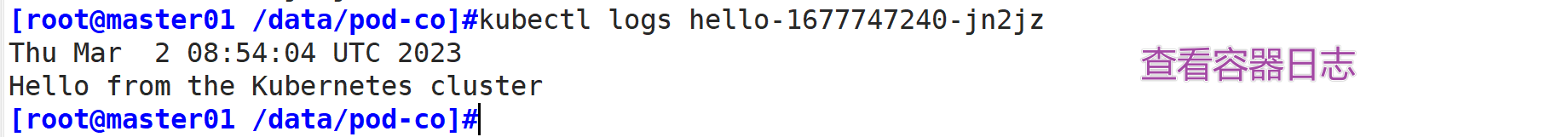

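Example:

// Print hello every minute

    vim cronjob.yaml
    apiVersion: batch/v1beta1    # use batch/v1 on Kubernetes 1.21 and later; batch/v1beta1 was removed in 1.25
    kind: CronJob
    metadata:
      name: hello
    spec:
      schedule: "*/1 * * * *"
      jobTemplate:
        spec:
          template:
            spec:
              containers:
              - name: hello
                image: busybox
                imagePullPolicy: IfNotPresent
                args:
                - /bin/sh
                - -c
                - date; echo Hello from the Kubernetes cluster
              restartPolicy: OnFailure

// Other parameters available for a CronJob

    spec:
      concurrencyPolicy: Allow            # whether runs may overlap; Allow is the default
      failedJobsHistoryLimit: 1           # number of failed finished jobs to keep (default 1)
      schedule: '*/1 * * * *'             # the job schedule; in this example the job runs once a minute
      startingDeadlineSeconds: 15         # the job must start within 15 seconds of its scheduled time; otherwise the run is skipped and counted as failed
      successfulJobsHistoryLimit: 3       # number of successfully finished jobs to keep (default 3)
      terminationGracePeriodSeconds: 30   # Pod-level setting (belongs in the Pod spec inside jobTemplate): seconds a Pod is given to shut down gracefully
      jobTemplate:                        # the job template; same structure as the Job example

    kubectl create -f cronjob.yaml

    kubectl get cronjob
    NAME    SCHEDULE      SUSPEND   ACTIVE   LAST SCHEDULE   AGE
    hello   */1 * * * *   False     0        <none>          25s

    kubectl get pods
    NAME                     READY   STATUS      RESTARTS   AGE
    hello-1621587180-mffj6   0/1     Completed   0          3m
    hello-1621587240-g68w4   0/1     Completed   0          2m
    hello-1621587300-vmkqg   0/1     Completed   0          60s

    kubectl logs hello-1621587180-mffj6
    Fri May 21 09:03:14 UTC 2021
    Hello from the Kubernetes cluster

// If this error appears: Error from server (Forbidden): Forbidden (user=system:anonymous, verb=get, resource=nodes, subresource=proxy) ( pods/log hello-1621587780-c7v54)

// Workaround: bind the cluster-admin role to system:anonymous (suitable for a lab cluster only, since it gives every unauthenticated request full cluster access)

    kubectl create clusterrolebinding system:anonymous --clusterrole=cluster-admin --user=system:anonymous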
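The schedule string uses the standard five-field cron layout: minute, hour, day of month, month, day of week. A small sketch that splits a schedule into its named fields; `cron_fields` is a hypothetical helper for illustration and does no validation.

```shell
# Hypothetical helper: split a five-field cron schedule into named fields.
# The here-document passes the schedule to read without glob expansion of '*'.
cron_fields() (
  read -r minute hour dom month dow <<EOF
$1
EOF
  echo "minute=$minute hour=$hour day-of-month=$dom month=$month day-of-week=$dow"
)

cron_fields '*/1 * * * *'
# prints: minute=*/1 hour=* day-of-month=* month=* day-of-week=*
cron_fields '0 2 * * 1'
# prints: minute=0 hour=2 day-of-month=* month=* day-of-week=1
```

Read this way, `*/1 * * * *` means "every minute of every hour of every day", which matches the one-minute spacing of the hello Pods in the output above.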